MHRA Update: AI/ML Transparency Rules for Clinical Trials

MHRA now requires AI/ML transparency in clinical trials, mandating disclosure of AI systems, validation methods, and governance in GCP inspection dossiers.

1.0. Introduction: MHRA Makes AI Inspection-Relevant in Clinical Trials

The UK Medicines and Healthcare products Regulatory Agency (MHRA) has updated its Good Clinical Practice (GCP) inspection dossier requirements to explicitly include comprehensive details about artificial intelligence and machine learning (AI/ML) systems used in clinical trials (@ Julia Appelskog, AI/ML in Clinical Trials).

This regulatory change marks a new era: AI is no longer a peripheral innovation in clinical operations; it is now inspection-relevant infrastructure. Sponsors must submit detailed documentation about any AI/ML systems used across the trial lifecycle, from operations and monitoring to analytics and decision support. This development places AI governance on par with traditional quality systems and risk management processes.

2.0. What the Updated MHRA Regulation Requires

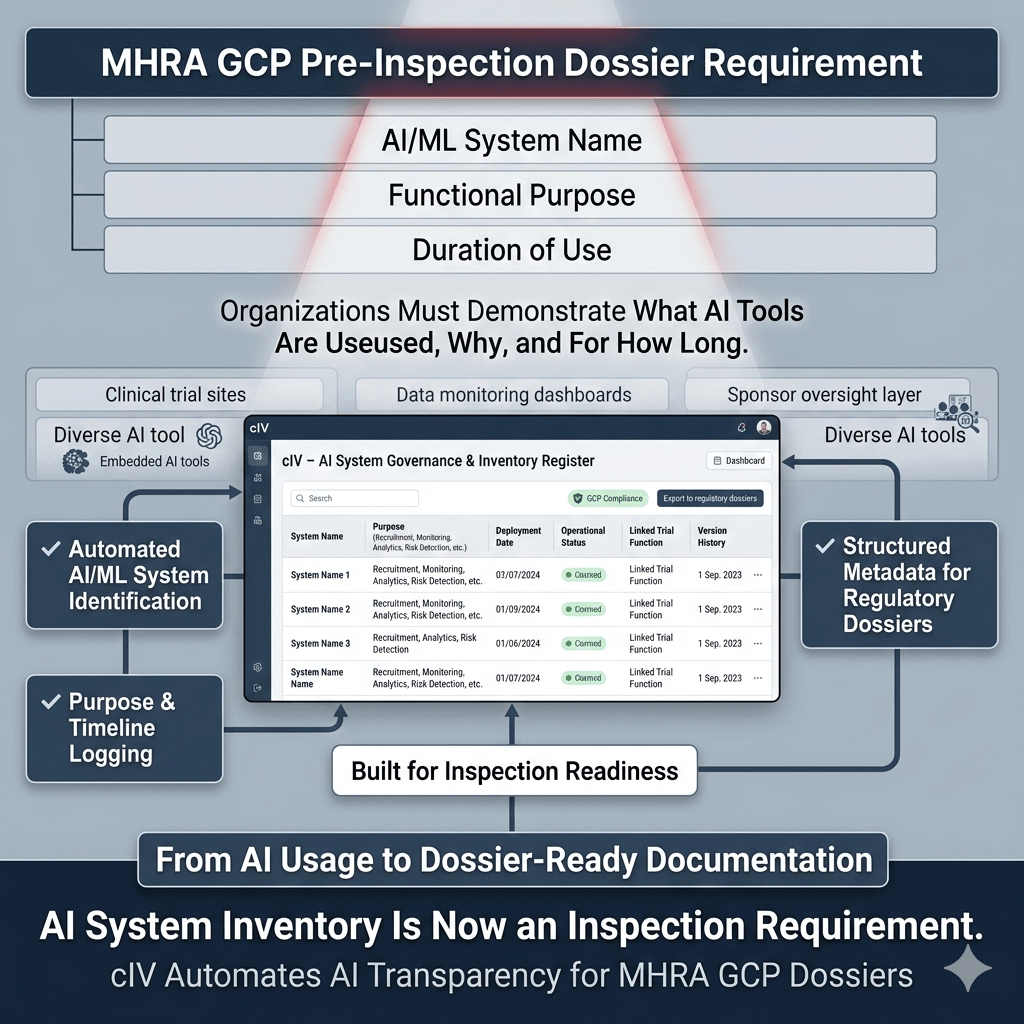

To be inspection-ready under the updated MHRA framework, organizations must include three key categories of AI/ML information in their pre-inspection dossiers:

2.1. AI/ML System Identification — “Know What You’re Using”

Sponsors must list:

- The names of all AI/ML systems or tools deployed in the conduct, oversight, or delivery of the clinical trial

- The specific purpose of each system, such as recruitment optimization, monitoring, risk detection, or data analytics

- The duration of use, i.e., how long each tool has been operational in the context of the trial

This requirement ensures regulators have a transparent inventory of all algorithmically enabled components that could influence trial conduct or data integrity.
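The three items above (name, purpose, duration of use) map naturally onto a small structured record. The following is a minimal sketch, not an MHRA-prescribed format: the class `AISystemEntry`, its field names, the helper `missing_fields`, and the example system names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class AISystemEntry:
    """One row of a hypothetical AI/ML inventory; field names are illustrative."""
    name: str
    purpose: str
    in_use_from: date
    in_use_to: Optional[date] = None  # None means the tool is still in use

    def days_in_use(self, as_of: date) -> int:
        """Duration of use in days, up to the end date or the given reference date."""
        end = self.in_use_to or as_of
        return (end - self.in_use_from).days


def missing_fields(entry: AISystemEntry) -> list[str]:
    """Return the names of required text fields that were left blank."""
    return [f for f in ("name", "purpose") if not getattr(entry, f).strip()]


# Made-up example entries; the second one deliberately lacks a purpose.
inventory = [
    AISystemEntry("SiteRiskScorer", "risk detection", date(2024, 1, 15)),
    AISystemEntry("RecruitAssist", "", date(2024, 3, 1), date(2024, 6, 1)),
]

for entry in inventory:
    print(entry.name, entry.days_in_use(date(2024, 7, 1)), missing_fields(entry))
```

A completeness check like `missing_fields` is one simple way to catch inventory rows that would leave a gap in the dossier before an inspector does.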

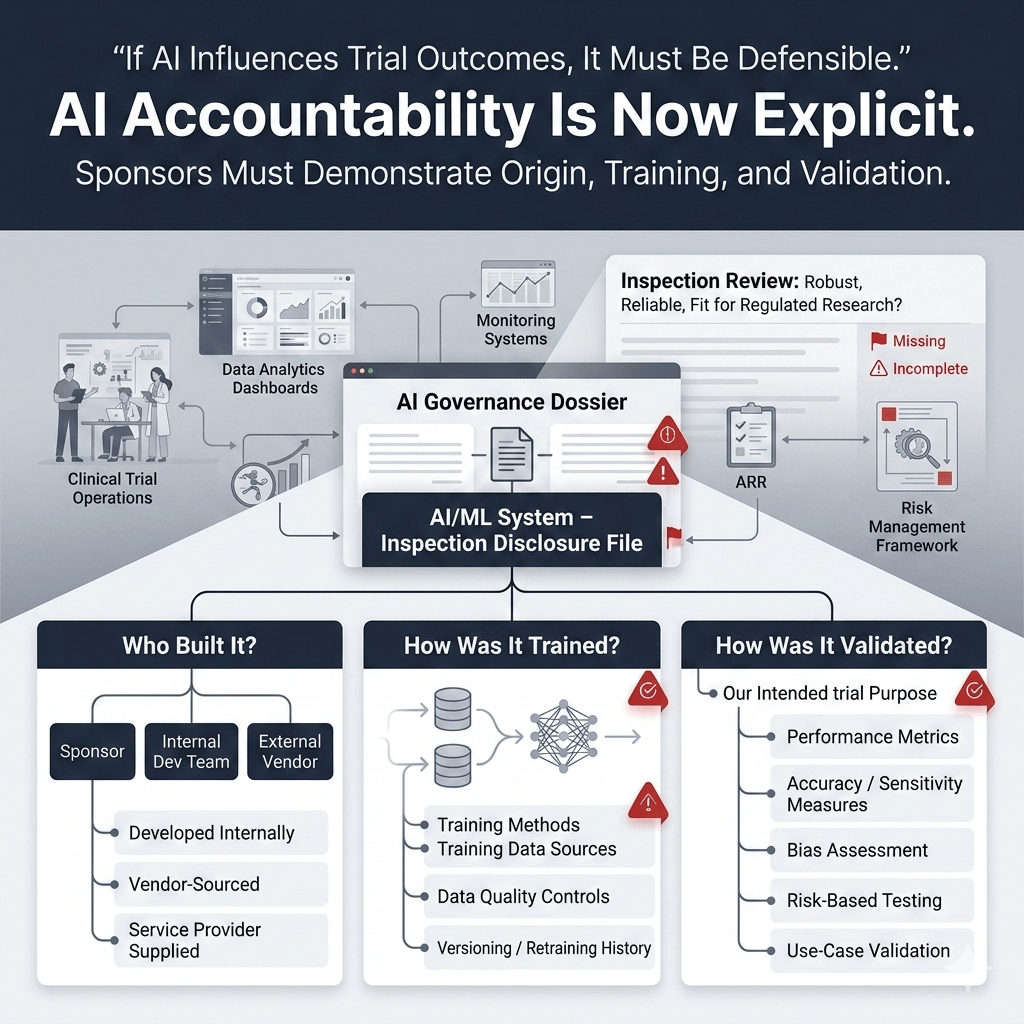

2.2. Development and Validation Transparency — “Know Where It Came From”

For each AI/ML system, sponsors must provide:

- Whether the system was developed internally or sourced from an external vendor

- Clarification on how the model was trained, including training methods and data sources

- Details about how the system was validated, i.e., how its performance and outputs were assessed for fitness for purpose

This reinforces accountability for AI systems that may impact trial outcomes, whether developed by the sponsor or provided by service providers, and ensures sponsors demonstrate that algorithmic tools are robust, reliable, and fit for regulated research.

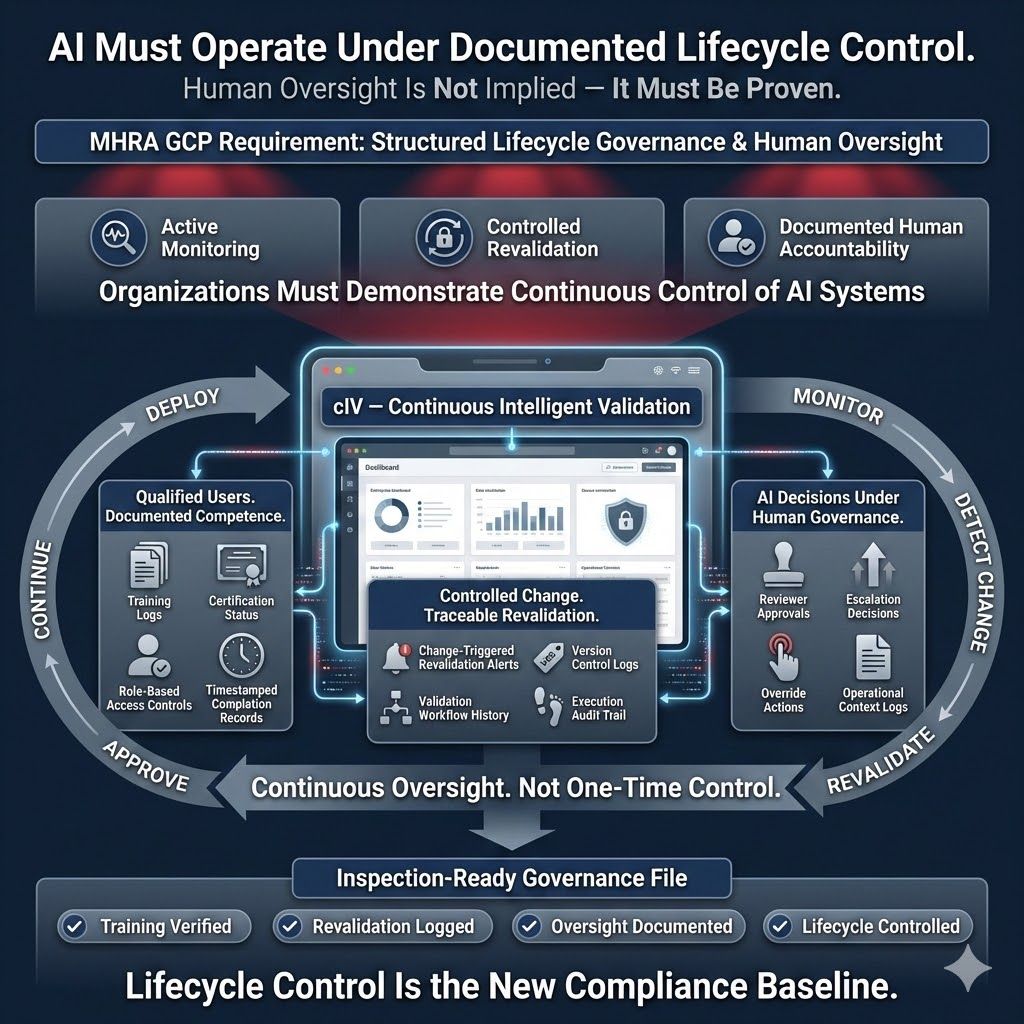

2.3. Documented Governance Processes — “Know How You Manage It”

Sponsors must demonstrate that their AI/ML systems are supported by structured lifecycle governance, including:

- Clear (re)validation processes, showing how AI systems are validated before use and re-validated upon change

- Documented user training, ensuring personnel understand system capabilities, limitations, and oversight responsibilities

- Defined operational controls, including how the system is used and how human oversight is applied

This requirement reflects the expectation that AI use in regulated contexts is transparent, and that humans understand and control these systems throughout the trial.
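"Re-validated upon change" implies some way of detecting that a system has changed since it was last validated. One possible approach, sketched here under the assumption that a system can be described by a version string and a configuration dictionary, is to store a fingerprint at validation time and compare it later. The function names `fingerprint` and `needs_revalidation` and the example values are hypothetical.

```python
import hashlib
import json


def fingerprint(model_version: str, config: dict) -> str:
    """Hash the version string and configuration into a stable identifier."""
    # sort_keys makes the hash independent of dictionary ordering.
    payload = json.dumps({"version": model_version, "config": config}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def needs_revalidation(validated_fingerprint: str, model_version: str, config: dict) -> bool:
    """True when the current system differs from the state that was validated."""
    return fingerprint(model_version, config) != validated_fingerprint


# Fingerprint recorded when the (made-up) system was validated.
validated = fingerprint("1.2.0", {"threshold": 0.8})

unchanged = needs_revalidation(validated, "1.2.0", {"threshold": 0.8})
config_changed = needs_revalidation(validated, "1.2.0", {"threshold": 0.9})
version_changed = needs_revalidation(validated, "1.2.1", {"threshold": 0.8})
print(unchanged, config_changed, version_changed)
```

This only flags that something changed; deciding whether the change warrants full or partial re-validation remains a documented human judgment.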

3.0. Bridging the Compliance Gap with Continuous Intelligent Validation (cIV)

The MHRA requirements challenge organizations to maintain comprehensive, auditable records of AI/ML use across complex trial workloads without overwhelming manual effort.

This is where Continuous Intelligent Validation (cIV) becomes a powerful ally. cIV is not just automation; it is an AI-powered, integrated, end-to-end validation intelligence framework that transforms how regulated organizations plan, execute, and document validation evidence and lifecycle governance (@xLM Continuous Intelligence, AI in GxP Manufacturing, Continuous Intelligent Validation (cIV): From Months of Manual Validation to Minutes of Intelligent Execution).

3.1. Core Capabilities of cIV

1. Intelligent, end-to-end validation orchestration

cIV automates and orchestrates the entire validation lifecycle, including:

- Requirements capture, translating inputs into structured User Requirement Specifications (URS)

- Automated test case generation, deriving test plans directly from URS

- Execution and evidence capture, performing validation steps and logging results

By embedding traceability across artifacts (URS → risks → test cases → results), cIV builds comprehensive, audit-ready documentation automatically, a key differentiator from manual validation (@xLM Continuous Intelligence, AI in GxP Manufacturing, Continuous Intelligent Validation (cIV): From Months of Manual Validation to Minutes of Intelligent Execution).
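The traceability chain (URS → risks → test cases → results) can be illustrated with a small gap check. This is a generic sketch of the idea, not cIV's actual data model or API, which the source does not describe; the `Requirement` class, the `trace_gaps` function, and all identifiers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """A URS item with the risks and test cases linked to it (illustrative only)."""
    req_id: str
    risk_ids: list[str] = field(default_factory=list)
    test_ids: list[str] = field(default_factory=list)


def trace_gaps(requirements: list[Requirement], results: dict[str, str]) -> dict[str, list[str]]:
    """Map each requirement ID to the problems found along its trace chain."""
    gaps: dict[str, list[str]] = {}
    for req in requirements:
        problems = []
        if not req.risk_ids:
            problems.append("no risk assessed")
        if not req.test_ids:
            problems.append("no test case")
        for test_id in req.test_ids:
            if test_id not in results:
                problems.append(f"{test_id}: no result")
            elif results[test_id] != "pass":
                problems.append(f"{test_id}: {results[test_id]}")
        if problems:
            gaps[req.req_id] = problems
    return gaps


# Made-up example data: one fully traced requirement and two with gaps.
requirements = [
    Requirement("URS-1", ["R-1"], ["TC-1"]),
    Requirement("URS-2", [], ["TC-2"]),
    Requirement("URS-3", ["R-2"], ["TC-3"]),
]
results = {"TC-1": "pass", "TC-2": "fail"}

gaps = trace_gaps(requirements, results)
print(gaps)
```

An empty result from `trace_gaps` would mean every requirement has at least one linked risk and one passing test, which is the property an auditor typically looks for in a traceability matrix.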

2. AI-generated, human-governed automation

cIV blends autonomous AI agents with controlled human oversight:

- AI agents generate content (e.g., URS, test scripts) and populate traceability matrices

- Humans review and approve outputs, ensuring human-in-the-loop governance aligns with regulatory expectations

- Reviewer decisions and approvals are logged for inspection readiness

This model ensures AI accelerates execution while humans retain accountability, a vital balance in regulated environments.
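The human-in-the-loop pattern described above, where AI drafts an artifact and a person approves it with the decision logged, could be modelled as an append-only review log. The sketch below assumes the most recent decision on an artifact determines its status; `ReviewDecision`, `ReviewLog`, and the artifact and reviewer identifiers are hypothetical and do not represent cIV's implementation.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ReviewDecision:
    """An immutable record of one reviewer decision (illustrative fields)."""
    artifact_id: str
    reviewer: str
    approved: bool
    comment: str
    decided_at: datetime


class ReviewLog:
    """Append-only log: decisions are added, never edited or removed."""

    def __init__(self) -> None:
        self._decisions: list[ReviewDecision] = []

    def record(self, artifact_id: str, reviewer: str, approved: bool, comment: str = "") -> ReviewDecision:
        decision = ReviewDecision(
            artifact_id, reviewer, approved, comment, datetime.now(timezone.utc)
        )
        self._decisions.append(decision)
        return decision

    def is_released(self, artifact_id: str) -> bool:
        """Latest decision wins; an artifact with no decision is not released."""
        for decision in reversed(self._decisions):
            if decision.artifact_id == artifact_id:
                return decision.approved
        return False

    def history(self, artifact_id: str) -> list[ReviewDecision]:
        """All decisions for one artifact, oldest first, for inspection."""
        return [d for d in self._decisions if d.artifact_id == artifact_id]


log = ReviewLog()
log.record("URS-001", "a.reviewer", approved=False, comment="scope unclear")
log.record("URS-001", "a.reviewer", approved=True, comment="revised draft accepted")
print(log.is_released("URS-001"), log.is_released("TC-014"))
```

Keeping rejected decisions in the history, instead of overwriting them, is what lets the log show an inspector how an AI-generated draft was challenged before approval.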

3. Continuous, scalable, modern validation

Unlike traditional one-time validation events:

- cIV enables continuous validation workflows, aligning with modern development lifecycles like Agile and DevOps

- Validation is not a bottleneck; it integrates into day-to-day execution

- Artifact generation and documentation become ongoing, not episodic

This continuous readiness is valuable in hybrid, cloud-native, and software-driven environments where traditional, static validation approaches struggle to scale.

4.0. How cIV Aligns with MHRA AI/ML Requirements

Here’s how cIV directly addresses each key regulatory expectation:

4.1. Inventory & Traceability

A foundational MHRA requirement is maintaining a clear, inspection-ready inventory of every AI/ML system influencing clinical trial delivery or oversight. Organizations must demonstrate what tools are in use, why, and for how long. cIV addresses this by:

- Automatically identifying and logging all AI/ML systems in use

- Recording each system’s functional purpose and operational timelines

- Establishing structured system metadata designed for regulatory dossiers

These capabilities support the MHRA’s expectation to document AI systems’ names, purposes, and duration of use in the GCP pre-inspection dossier.

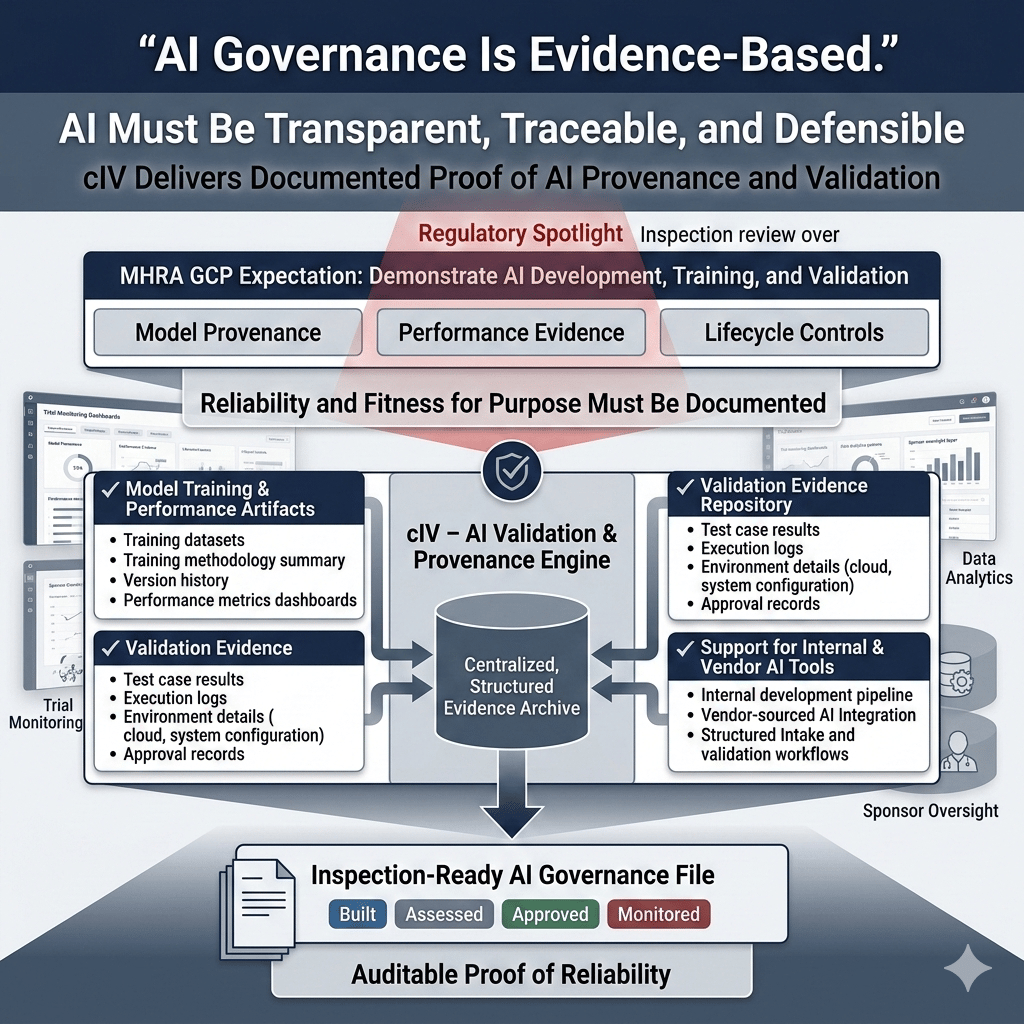

4.2. Provenance & Validation Lineage

MHRA expects sponsors to demonstrate how AI systems were developed, trained, and validated with documented evidence supporting their reliability and fitness for purpose. This requires transparency into model provenance, performance, and lifecycle controls. cIV enables this by:

- Capturing model training and performance artifacts

- Storing validation evidence, including test results and environment details

- Supporting both vendor-sourced and internally developed AI tools

These capabilities provide auditable proof of how AI systems were built, assessed, and approved for use within clinical trial operations.

4.3. Governance & Human Oversight Documentation

MHRA requires demonstrating that AI/ML systems operate under structured lifecycle controls and meaningful human oversight. Regulators expect evidence that organizations actively manage, monitor, and control these systems throughout clinical trials. cIV supports this by:

- Logging user training activities and maintaining competency records

- Tracking re-validation triggers and maintaining execution history

- Documenting human oversight decisions and operational context

These capabilities provide documented proof of controlled system management, ensuring AI tools operate within defined governance frameworks aligned with regulatory expectations.

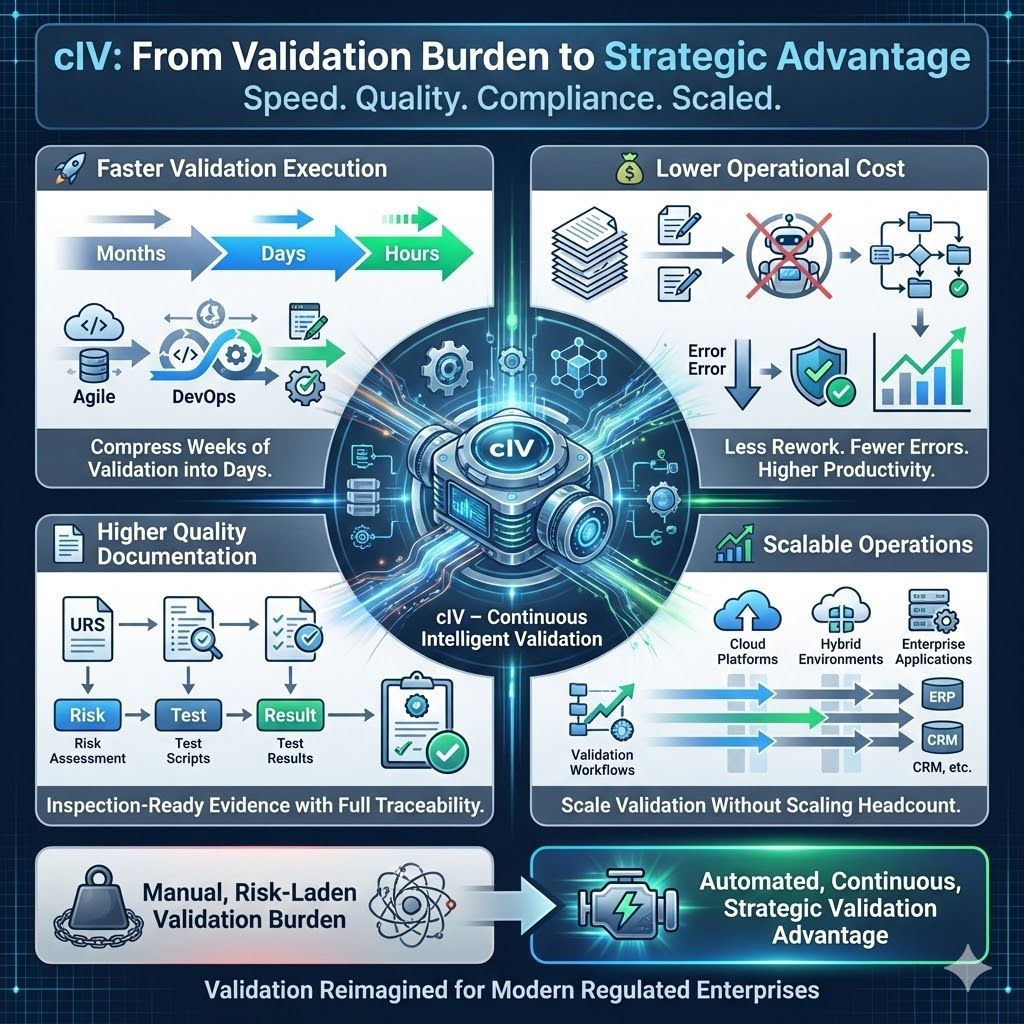

5.0. Business Impact Beyond Regulatory Compliance

cIV delivers measurable business value beyond regulatory compliance:

- Faster validation execution: Compressing weeks or months of work into hours accelerates project timelines.

- Lower operational cost: Reducing manual effort and rework cuts errors and helps teams get tasks right the first time.

- Higher quality documentation: Audit-ready evidence with full traceability helps organizations demonstrate compliance during audits.

- Scalable operations: Validation workflows scale across platforms and organizational functions, keeping validation consistent as systems and teams grow.

In a world where speed, quality, and compliance matter, cIV transforms validation from a risk-laden burden into a strategic advantage (@xLM Continuous Intelligence, AI in GxP Manufacturing, Continuous Intelligent Validation (cIV): From Months of Manual Validation to Minutes of Intelligent Execution).

6.0. Final Thoughts: AI Governance Is Now a Regulatory Requirement

The MHRA’s update underscores a broader trend:

AI governance in regulated environments is no longer optional or peripheral; it must be systematically documented, controlled, and auditable.

Continuous Intelligent Validation (cIV) provides a future-fit framework that helps organizations meet new UK regulatory requirements and accelerates their ability to innovate with confidence and compliance at the speed of AI.
