AI Governance in GxP: From Principles to Practice

Explore AI governance in GxP from principles to practice. Learn how to ensure traceability, validation, auditability, and human oversight in submissions.


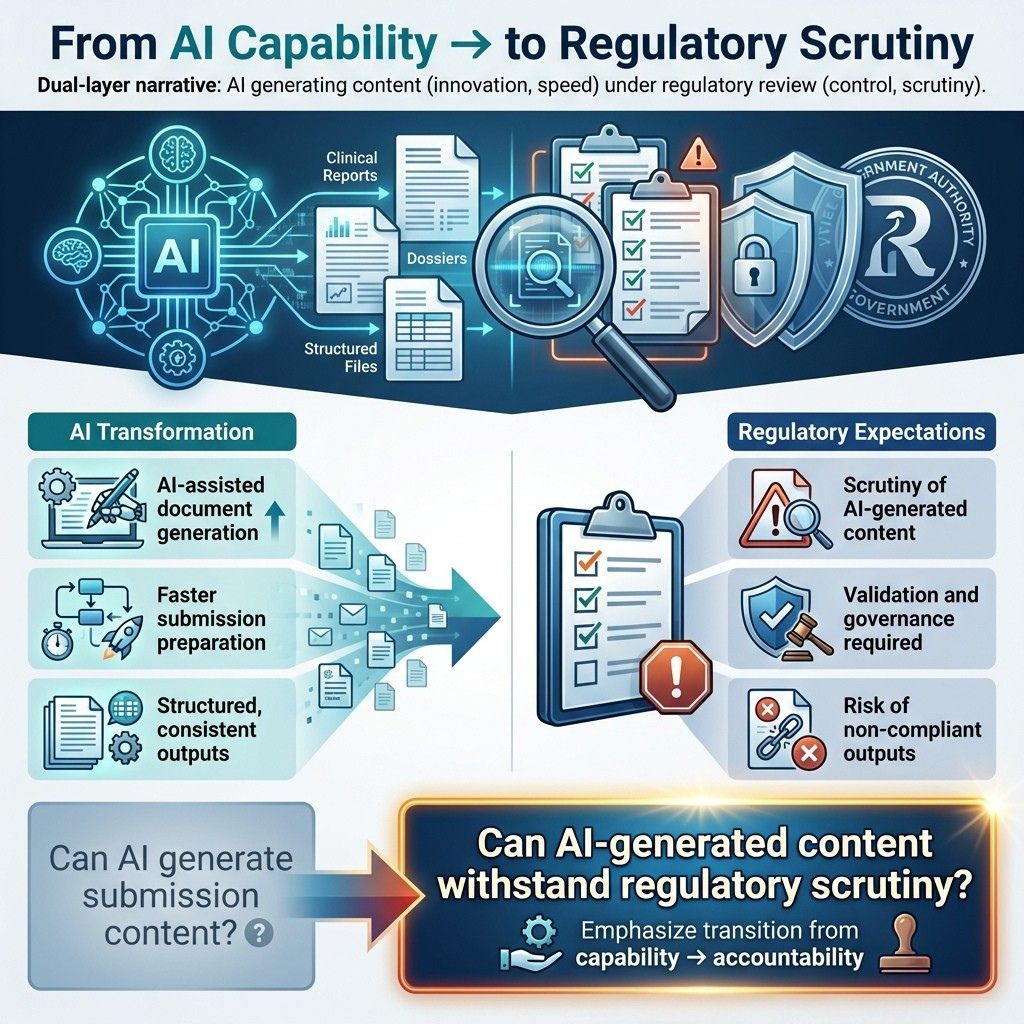

1.0. Preparing for AI-Assisted Regulatory Submissions

In Part 2 (this newsletter), we focus on where this transformation becomes tangible:

Regulatory submissions are becoming AI-assisted.

This is not just a productivity shift; it is a compliance inflection point.

The question is no longer:

Can AI generate submission content?

It is:

Can AI-generated content withstand regulatory scrutiny?

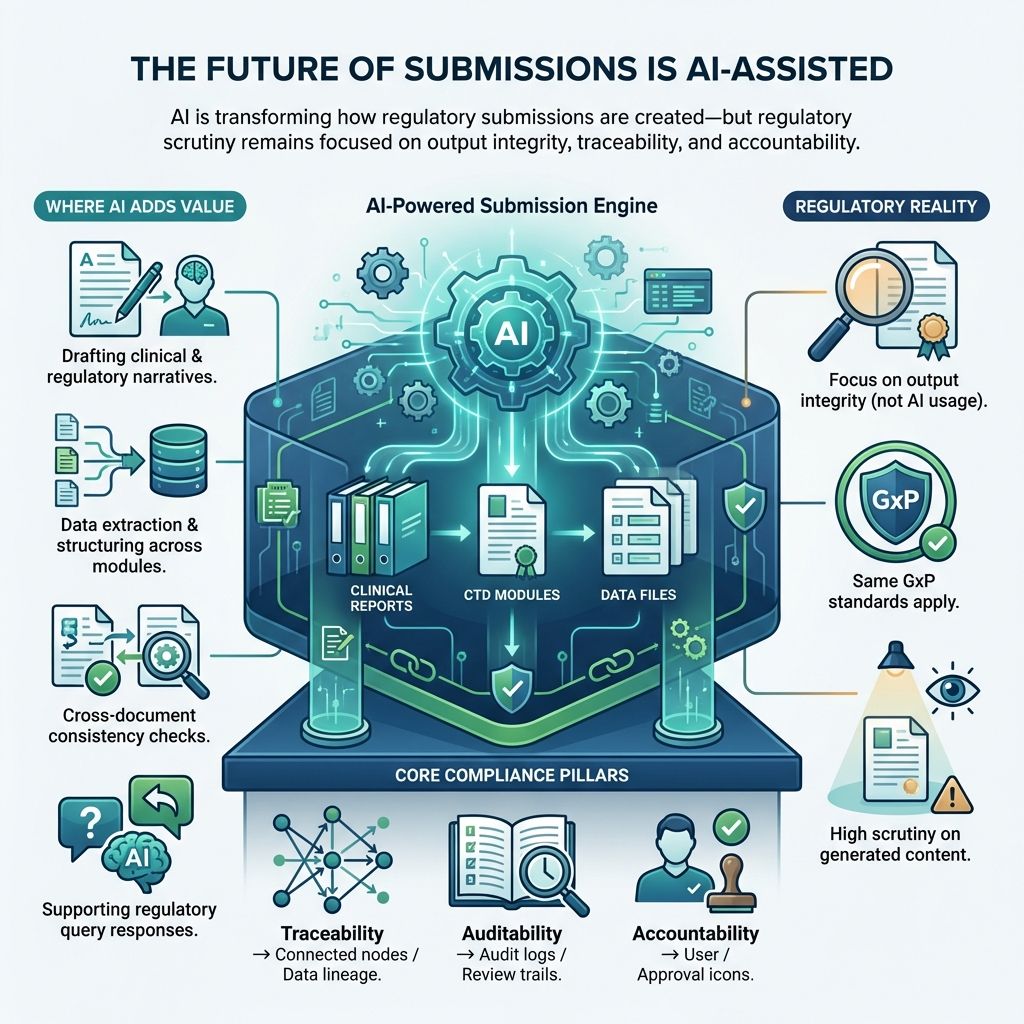

2.0. The Future of Regulatory Submissions Is AI-Assisted

AI is already embedded in submission workflows:

- Drafting clinical and regulatory narratives

- Extracting and structuring data across modules

- Automating cross-document consistency checks

- Supporting responses to regulatory queries

This changes how submissions are created.

Regulators do not evaluate the use of AI. They evaluate the integrity of the output.

AI-assisted submissions must meet the same rigorous standards as any GxP-controlled process: traceability, so every step and decision can be tracked and verified; auditability, so the work can be thoroughly reviewed and inspected; and accountability, so the parties responsible for the data and actions are clearly identified and answerable.

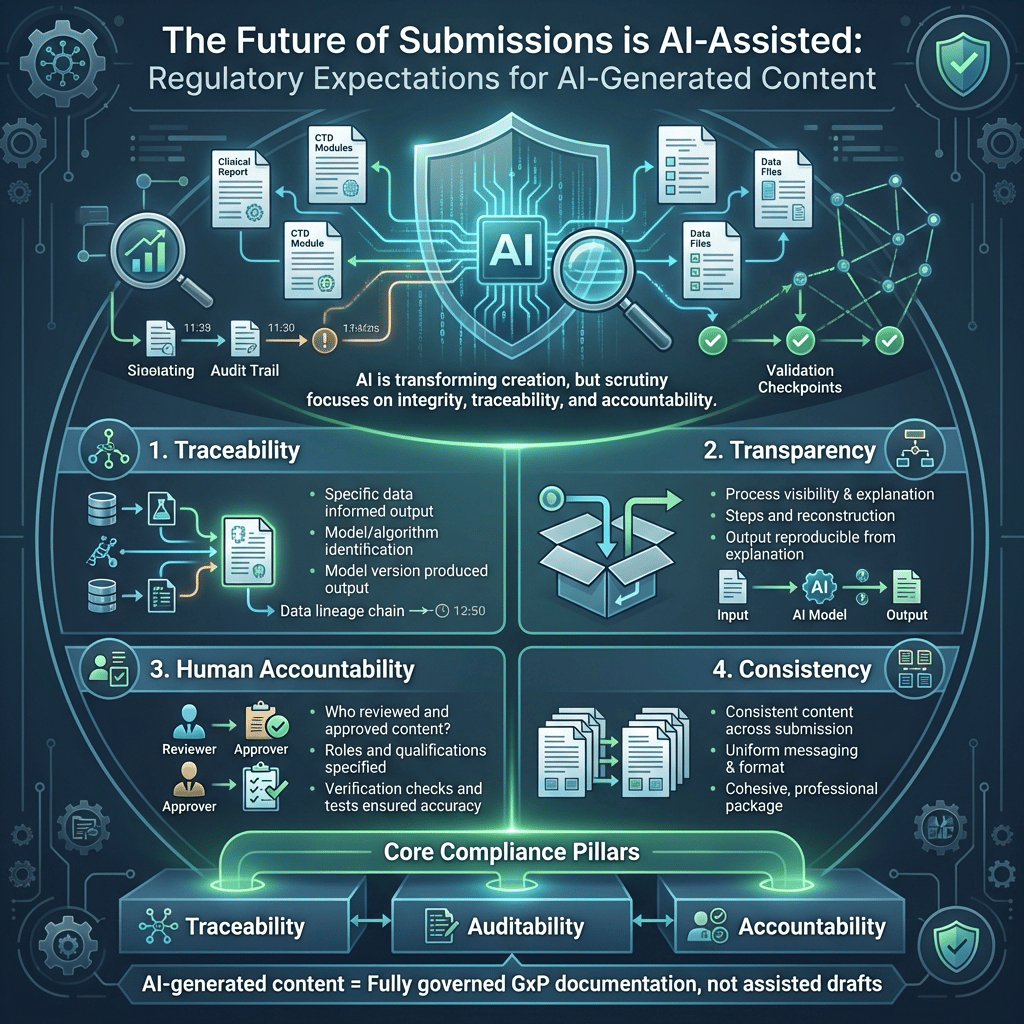

3.0. Regulatory Expectations for AI-Generated Content

AI adds complexity to regulatory documentation. Regulators expect organizations to demonstrate:

3.1. Traceability

- What specific data informed and supported the output?

- Which model or algorithm generated this output?

- What exact model version produced this output?
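As a rough illustration, the three traceability questions above can be answered by storing a small provenance record next to each generated output. This is a minimal sketch, not a prescribed format: the `ProvenanceRecord` class, its field names, and the model name and version strings are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical record answering the three traceability questions."""
    output_sha256: str    # fingerprint of the generated text
    source_sha256: tuple  # fingerprints of the data that informed it
    model_name: str       # which model generated the output
    model_version: str    # exact version of that model


def make_record(output_text, sources, model_name, model_version):
    """Build a provenance record from the output, its sources, and the model identity."""
    return ProvenanceRecord(
        output_sha256=sha256_hex(output_text.encode("utf-8")),
        source_sha256=tuple(sha256_hex(s) for s in sources),
        model_name=model_name,
        model_version=model_version,
    )


record = make_record(
    output_text="Draft narrative for an illustrative study section.",
    sources=[b"source table A", b"source listing B"],
    model_name="example-model",  # placeholder, not a real product
    model_version="1.4.2",       # placeholder version string
)
print(json.dumps(asdict(record), indent=2))
```

Hashing the sources rather than copying them keeps the record small while still letting a reviewer confirm that a given file is the one that informed the output.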

3.2. Transparency

- How was the output created? Explain the process and steps involved.

- Can it be explained and reconstructed? Confirm if the output and process can be fully reproduced from that explanation.

3.3. Human Accountability

- Who reviewed and approved the content? Specify individuals or teams, their roles, and qualifications.

- What verification was done? Describe checks, tests, or validations ensuring accuracy and reliability.

3.4. Consistency

- Is the content consistent across all submission documents? Ensure uniform messaging, format, and key details for a cohesive, professional package.

AI-generated content must be treated as fully governed GxP documentation, not as informal AI-assisted drafts.

4.0. Key AI Risks Regulators Focus On

The risks are familiar, but AI amplifies them:

- Hallucinations - Statements that sound convincing and credible but are false.

- Data integrity gaps - Issues from biased or unverified input data reducing accuracy and trust.

- Model drift - Output changes over time due to data or condition shifts, lowering performance if unchecked.

- Lack of explainability - Inability to clearly explain decisions, reducing user trust and understanding.

- Over-automation - Excessive automation reducing human oversight, risking missed errors and less accountability.

These map directly to core GxP concerns: data integrity, validation, and accountability.

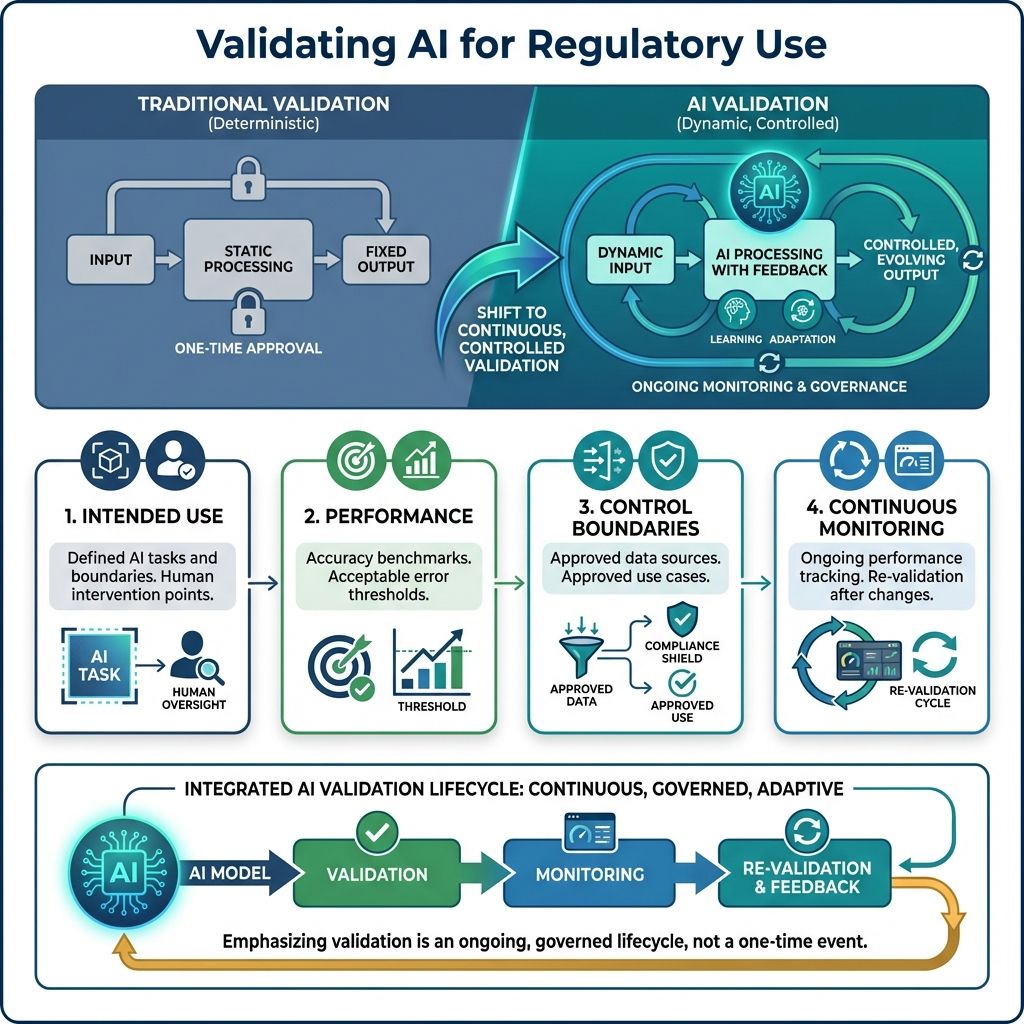

5.0. Validating AI for Regulatory Use

Traditional validation assumes deterministic systems; AI requires a different approach.

Organizations must validate:

5.1. Intended Use

Clear definitions of:

- AI's allowed autonomous tasks and actions.

- Where human intervention is required, specifying when judgment, oversight, or approval ensures accuracy, safety, and ethics.

5.2. Performance

- Accuracy benchmarks set standards to measure system performance and precision, ensuring consistent quality.

- Acceptable error thresholds define maximum allowed errors, maintaining integrity while permitting minor deviations.
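One way to make an acceptable error threshold concrete is to compare AI outputs against a human-verified reference set and check the resulting error rate against the agreed limit. The sketch below assumes exact-match scoring and a 5% limit purely for illustration; the function name, data, and threshold are hypothetical.

```python
def evaluate_against_threshold(predictions, references, max_error_rate):
    """Compare outputs with a human-verified reference set.

    Returns (error_rate, passed), where `max_error_rate` is the
    acceptable error threshold agreed for the intended use.
    """
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("reference set must not be empty")
    errors = sum(1 for p, r in zip(predictions, references) if p != r)
    error_rate = errors / len(references)
    return error_rate, error_rate <= max_error_rate


# Illustrative data only: extracted field values vs. verified values.
predicted = ["10 mg", "twice daily", "oral", "28 days"]
verified = ["10 mg", "twice daily", "oral", "30 days"]

rate, passed = evaluate_against_threshold(predicted, verified, max_error_rate=0.05)
print(f"error rate = {rate:.2f}, passed = {passed}")
# prints: error rate = 0.25, passed = False
```

Exact matching is the strictest possible scoring rule. Free-text outputs would likely need a different comparison, and that rule would itself have to be defined and justified.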

5.3. Control Boundaries

- Use only approved data sources reviewed and authorized to ensure compliance and data integrity.

- Use only approved use cases aligned with organizational policies and goals.
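Control boundaries like these are often enforced as simple allowlists checked before the AI tool runs. The following is a minimal sketch under that assumption; the source and use-case identifiers are invented for the example.

```python
# Hypothetical identifiers; a real list would come from a controlled register.
APPROVED_SOURCES = frozenset({"clinical_db_v3", "safety_listings_2024"})
APPROVED_USE_CASES = frozenset({"narrative_drafting", "consistency_check"})


def check_boundaries(use_case, sources):
    """Return a list of boundary violations; an empty list means the request is in scope."""
    violations = []
    if use_case not in APPROVED_USE_CASES:
        violations.append(f"use case not approved: {use_case}")
    for source in sources:
        if source not in APPROVED_SOURCES:
            violations.append(f"data source not approved: {source}")
    return violations


print(check_boundaries("narrative_drafting", ["clinical_db_v3"]))
# prints: []
print(check_boundaries("label_generation", ["clinical_db_v3", "web_scrape"]))
# prints: ['use case not approved: label_generation', 'data source not approved: web_scrape']
```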

5.4. Continuous Monitoring

- Ongoing performance tracking keeps the system efficient by monitoring metrics and trends to spot issues early.

- Re-validation after model or data changes ensures accuracy and alignment with goals.
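The re-validation trigger can be sketched as a comparison between the state that was last validated and the state currently in use. This covers only the second bullet above, and the `ValidatedState` class and its two fields are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidatedState:
    """Hypothetical snapshot of what was in place when validation was last signed off."""
    model_version: str
    data_snapshot_id: str


def revalidation_reasons(validated, current):
    """Return the reasons re-validation is needed; an empty list means none."""
    reasons = []
    if current.model_version != validated.model_version:
        reasons.append("model version changed")
    if current.data_snapshot_id != validated.data_snapshot_id:
        reasons.append("data snapshot changed")
    return reasons


validated = ValidatedState(model_version="1.4.2", data_snapshot_id="snap-2024-01")
current = ValidatedState(model_version="1.5.0", data_snapshot_id="snap-2024-01")
print(revalidation_reasons(validated, current))
# prints: ['model version changed']
```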

6.0. Operationalizing AI in Regulatory Teams

To move from experimentation to production, embed AI into:

- Submission authoring workflows that gather, organize, and format information properly.

- Review and approval processes with multiple stages to check accuracy, relevance, and compliance before final approval.

- Quality and compliance systems maintaining standards during the submission lifecycle to avoid errors and ensure consistency.

This requires:

- Clear ownership ensuring responsibilities and accountability are assigned to specific individuals or teams.

- Controlled workflows managed to maintain consistency and efficiency, minimizing errors and delays.

- Integrated governance mechanisms enabling oversight and compliance with policies and regulations seamlessly.

7.0. Operationalizing AI Governance with cIV

This is where principles from Sections 1–6 translate into execution.

While organizations understand the need for traceability, validation, and governance, most struggle to operationalize these consistently across GxP systems and AI-enabled workflows.

cIV bridges this gap by embedding validation, governance, and control directly into the lifecycle of both traditional GxP software and AI-driven systems.

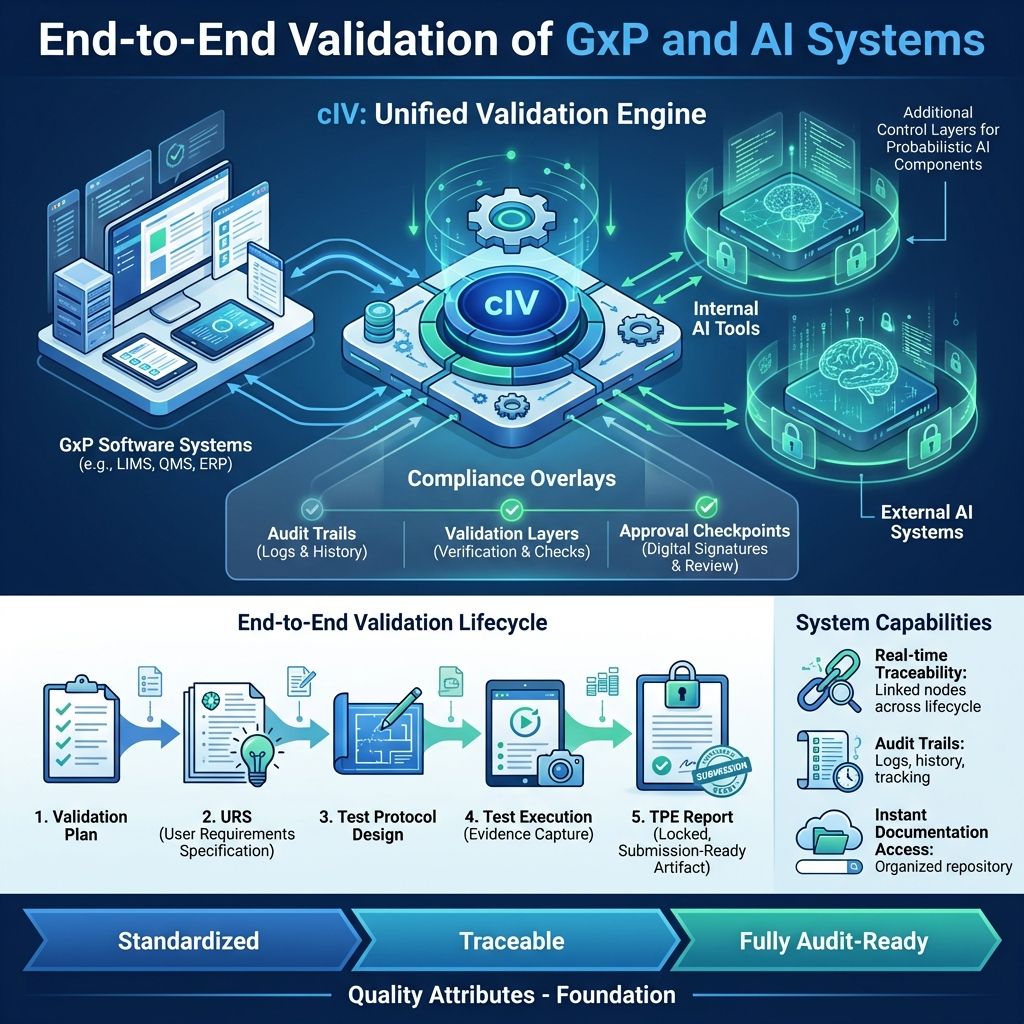

7.1. End-to-End Validation of GxP and AI Systems

cIV enables companies to validate:

- GxP software applications

- AI tools developed internally

- External AI systems integrated into workflows

It provides a structured, end-to-end validation lifecycle, ensuring that every system, deterministic or AI-driven, meets regulatory expectations.

This includes:

- Validation Plan creation aligned with intended use and risk classification

- User Requirement Specification (URS) generation with clear, testable requirements

- Test Protocol design covering functional, compliance, and risk scenarios

- Automated and guided test execution with documented evidence

- GxP-compliant Test Protocol Execution Report (TPE) generation as a locked, submission-ready artifact, ensuring integrity, traceability, and readiness for regulatory review

- Real-time traceability linkage between requirements, design, test cases, and executed tests, providing complete, auditable oversight

- Complete audit trails and comprehensive documentation packages, instantly accessible for any regulatory inspection
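To illustrate the traceability linkage in general terms, the sketch below checks which requirements are not yet covered by a passed test. It does not describe how cIV implements this; the function, the ID formats, and the data are hypothetical.

```python
def untraced_requirements(requirements, test_cases, executions):
    """Return requirement IDs that lack a passed, executed test.

    requirements: iterable of requirement IDs
    test_cases:   dict mapping test ID -> list of requirement IDs it covers
    executions:   dict mapping test ID -> "pass" or "fail" (absent = not executed)
    """
    covered = set()
    for test_id, req_ids in test_cases.items():
        if executions.get(test_id) == "pass":
            covered.update(req_ids)
    return sorted(set(requirements) - covered)


requirements = ["URS-001", "URS-002", "URS-003"]
test_cases = {"TC-01": ["URS-001"], "TC-02": ["URS-002", "URS-003"]}
executions = {"TC-01": "pass", "TC-02": "fail"}

print(untraced_requirements(requirements, test_cases, executions))
# prints: ['URS-002', 'URS-003']
```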

Each stage is:

- Standardized - Processes and procedures are consistent and uniform across all operations.

- Traceable - Every action and change can be tracked and attributed throughout the process.

- Fully audit-ready - Compliance evidence and documentation are prepared and available for audit.

AI is not treated as an exception; it is validated with the same rigor as any GxP-critical system, with additional controls for its probabilistic nature embedded into the process.

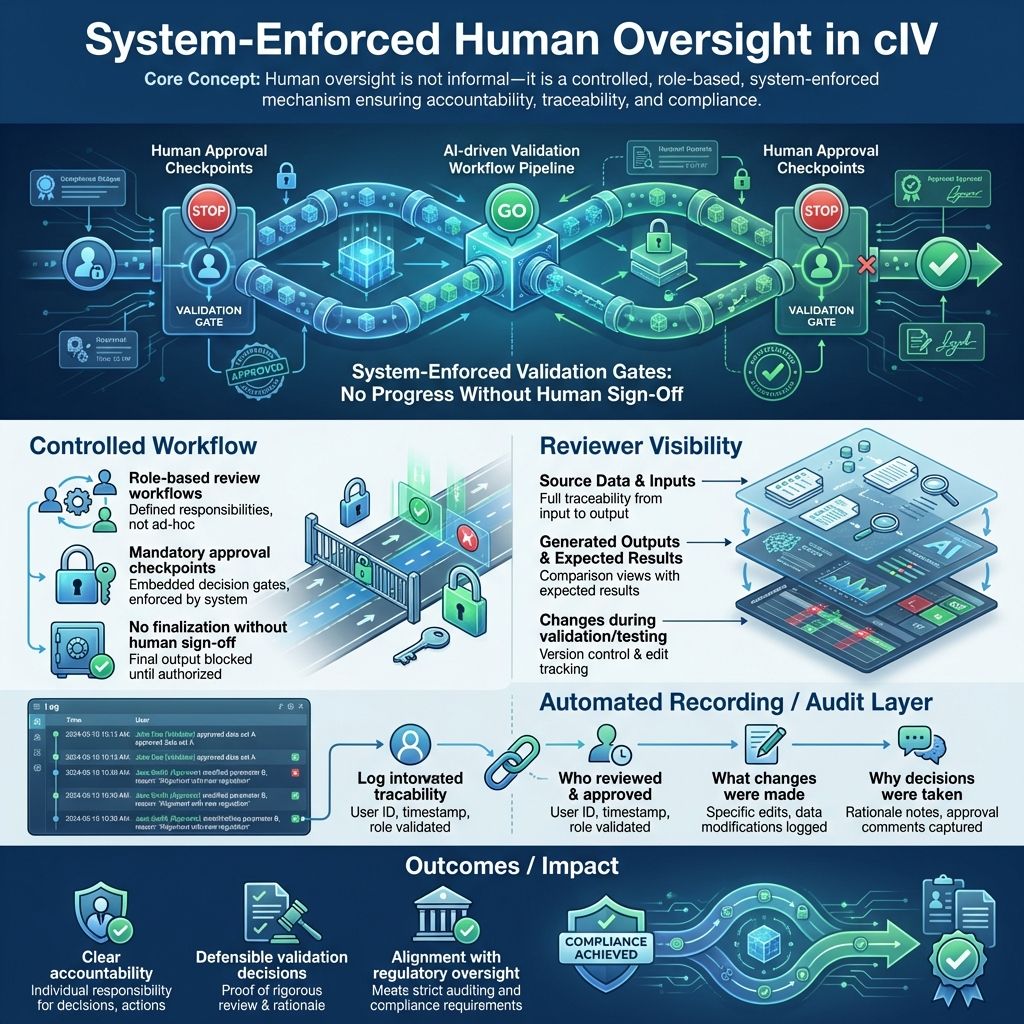

7.2. Built-In Human-in-the-Loop Governance

cIV embeds human oversight as a controlled, system-enforced mechanism, not an informal step.

- Every validation artifact and AI-generated output passes through role-based review workflows

- No document or test outcome is finalized without explicit human approval checkpoints

- Reviewers have full visibility into:

  - Source data and inputs

  - Generated outputs and expected results

  - Changes made during validation and testing

The system automatically records:

- Who reviewed and approved

- What changes were made

- Why decisions were taken

This ensures:

- Clear accountability - Each person or team knows their duties, which prevents confusion and overlap and eases progress tracking and issue resolution.

- Defensible validation decisions - Decisions are supported by evidence and reasoning and can withstand stakeholder or audit challenges.

- Alignment with regulatory oversight - Compliance with laws and guidelines is maintained, avoiding penalties and showing commitment to ethical standards.
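A system-enforced approval checkpoint, as opposed to an informal review step, can be sketched as an artifact that refuses to finalize until the required roles have approved it. This is an illustrative model only, not cIV's implementation; the `Artifact` class, role names, and reviewer IDs are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Artifact:
    """Hypothetical validation artifact that cannot be finalized without approval."""
    name: str
    author: str
    approvals: list = field(default_factory=list)  # (reviewer, role, rationale)
    finalized: bool = False

    def approve(self, reviewer, role, rationale):
        """Record who approved, in which role, and why."""
        if not rationale.strip():
            raise ValueError("a rationale is required for every approval")
        self.approvals.append((reviewer, role, rationale))

    def finalize(self, required_roles):
        """Finalize only when every required role has approved."""
        approved_roles = {role for _, role, _ in self.approvals}
        missing = set(required_roles) - approved_roles
        if missing:
            raise PermissionError(f"missing approvals from: {sorted(missing)}")
        self.finalized = True


report = Artifact(name="TPE report draft", author="ai-assistant")
try:
    report.finalize(required_roles=["QA"])
except PermissionError as exc:
    print(exc)  # prints: missing approvals from: ['QA']

report.approve("j.doe", "QA", "Results match acceptance criteria")
report.finalize(required_roles=["QA"])
print(report.finalized)  # prints: True
```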

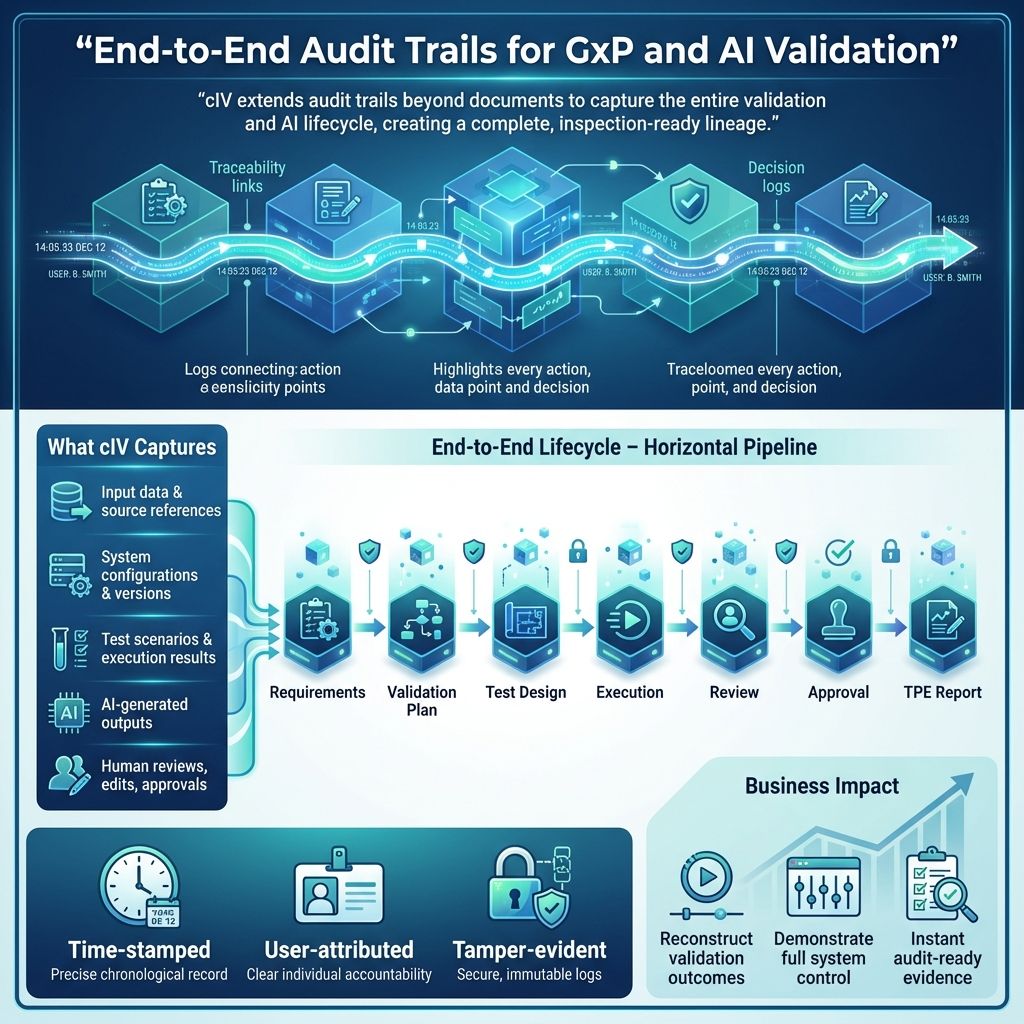

7.3. Comprehensive Audit Trails and Traceability

cIV extends audit trails beyond traditional document tracking to cover the entire validation and AI lifecycle.

It captures:

- Input data and source references

- System configurations and versions

- Test scenarios and execution results

- AI-assisted outputs (where applicable)

- Human reviews, edits, and approvals

This creates a complete, inspection-ready lineage:

Requirements → Validation Plan → Test Design → Execution → Review → Approval → TPE Report

This sequence outlines the full testing and validation workflow. It starts with defining Requirements, the project's foundation, followed by creating a Validation Plan detailing strategies to ensure these requirements are met.

Next, Test Design creates specific cases from the plan to assess the system. Then, Execution runs these tests to verify functionality and performance.

After testing, Review analyzes results for issues or deviations. Approval then secures formal acceptance from stakeholders, confirming standards are met.

Finally, the TPE Report documents the testing, findings, and approvals, serving as a key record for compliance and future improvements.

All records are:

- Time-stamped

- User-attributed

- Tamper-evident

This allows organizations to:

- Reconstruct how any validation outcome was achieved

- Demonstrate full control over systems and AI usage

- Provide instant, audit-ready evidence during inspections
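The three record properties listed above (time-stamped, user-attributed, tamper-evident) are commonly illustrated with a hash-chained log, where each entry stores the hash of the one before it. The sketch below shows that general technique under simplifying assumptions; it is not a description of cIV's internals, and the user names, actions, and timestamps are invented.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


def entry_hash(body):
    """Hash an entry body deterministically (keys sorted before encoding)."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log, user, action, timestamp):
    """Append a time-stamped, user-attributed entry linked to the previous one."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"user": user, "action": action, "timestamp": timestamp, "prev_hash": prev}
    log.append({**body, "hash": entry_hash(body)})


def verify_chain(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log = []
append_entry(log, "a.smith", "executed TC-01", "2024-01-01T10:00:00Z")
append_entry(log, "j.doe", "approved TC-01 result", "2024-01-01T11:30:00Z")
print(verify_chain(log))  # prints: True

log[0]["action"] = "executed TC-02"  # simulate tampering with an old record
print(verify_chain(log))  # prints: False
```

A chain like this detects edits only if the latest hash is also kept somewhere the editor cannot change, so in practice it would be combined with access controls and secure storage.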

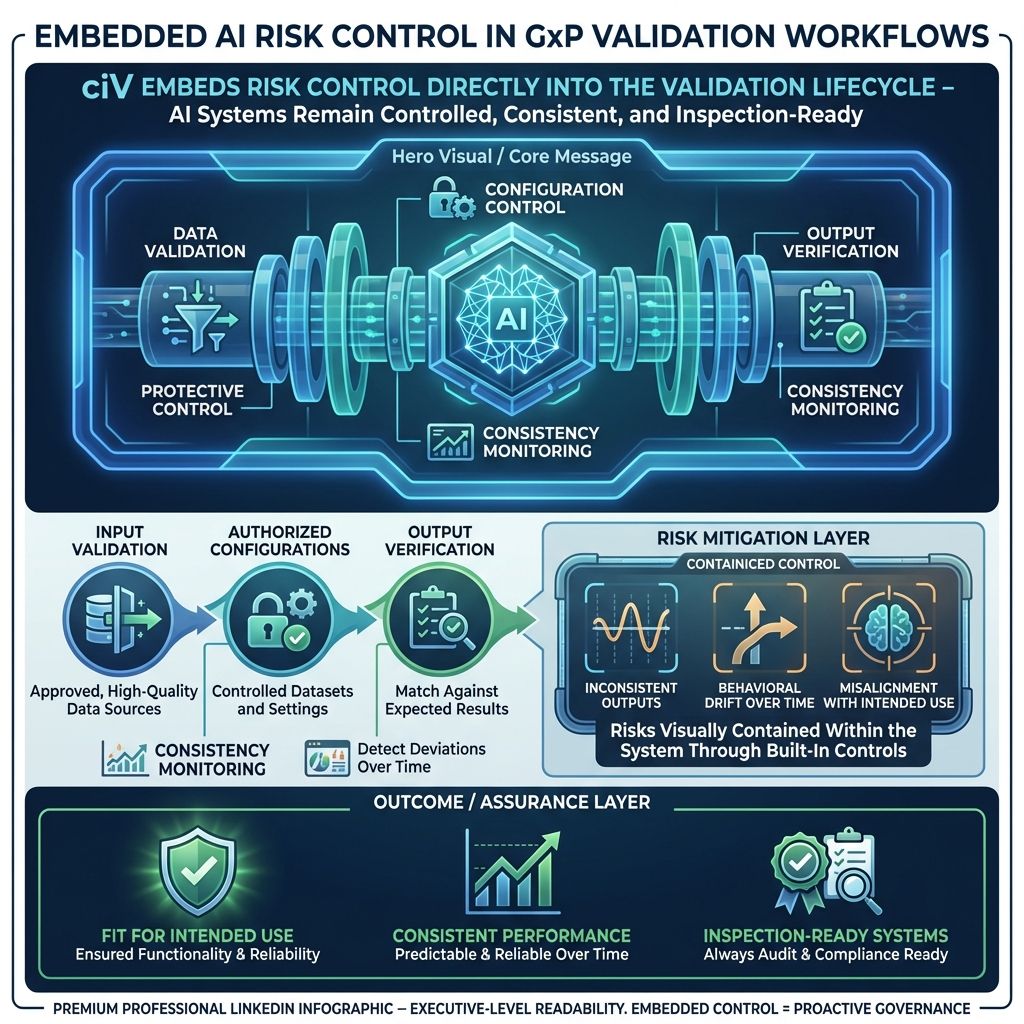

7.4. Continuous Control Over AI Behavior Within Validation

cIV ensures AI systems used within GxP environments remain controlled and reliable as part of the validation process itself.

Instead of treating AI risks separately, cIV embeds controls directly into validation workflows by:

- Validating input data sources to ensure only approved, high-quality data is used

- Enforcing use of authorized datasets and configurations

- Verifying outputs against expected results and acceptance criteria

- Continuously tracking consistency across executions to detect deviations

By governing inputs, sources, and outputs together, cIV manages risks such as:

- Inconsistent or unreliable outputs

- Undetected changes in behavior over time

- Misalignment between intended use and actual system performance

This ensures AI-driven systems remain fit for intended use, consistent, and inspection-ready throughout their lifecycle.
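Tracking consistency across executions can be approximated by fingerprinting the output of repeated runs on the same input and flagging inputs whose outputs differ. The sketch assumes that whitespace-normalized exact matching is an adequate definition of "consistent", which will not hold for every probabilistic system; all names and data are hypothetical.

```python
import hashlib
from collections import defaultdict


def fingerprint(text):
    """Hash whitespace-normalized text so trivial spacing differences are ignored."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_inconsistent_inputs(runs):
    """runs: iterable of (input_id, output_text).

    Return the input IDs that produced more than one distinct output.
    """
    seen = defaultdict(set)
    for input_id, output_text in runs:
        seen[input_id].add(fingerprint(output_text))
    return sorted(i for i, prints in seen.items() if len(prints) > 1)


runs = [
    ("case-1", "Dose: 10 mg once daily."),
    ("case-1", "Dose:  10 mg once daily."),  # same content, extra space
    ("case-2", "No adverse events reported."),
    ("case-2", "One adverse event reported."),
]
print(find_inconsistent_inputs(runs))
# prints: ['case-2']
```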

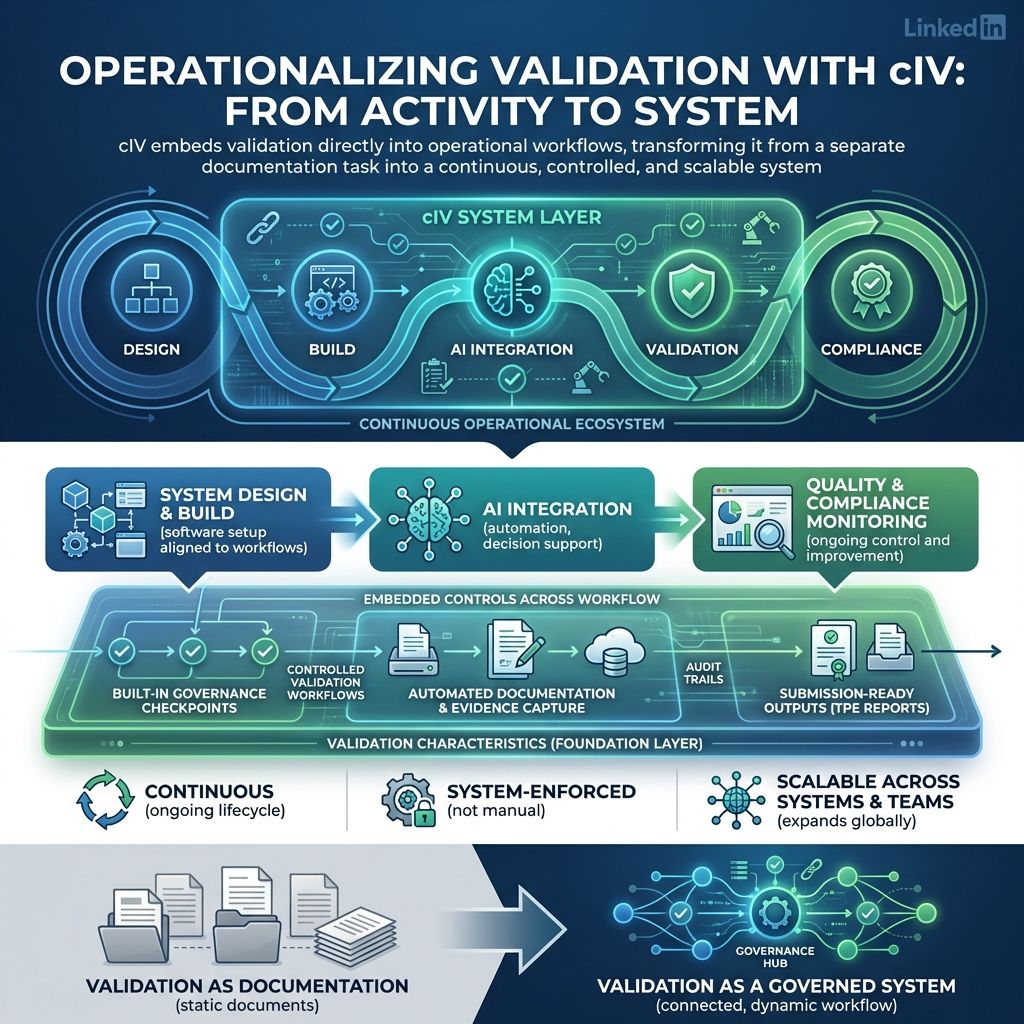

7.5. Embedding Validation into GxP Workflows

cIV integrates validation directly into operational workflows, rather than treating it as a separate activity.

It connects seamlessly with:

- Software design, build, and configuration, fitted to organizational needs and workflows

- AI tool integration and use, to boost productivity, automate tasks, and improve decisions

- Quality and compliance processes that ensure products meet standards and regulations through ongoing monitoring and improvement

With:

- Built-in governance checkpoints

- Controlled workflows across validation stages

- Automated documentation and evidence capture

- Seamless generation of submission-ready validation outputs, including TPE reports, for regulatory filings

This ensures validation is:

- Ongoing, not a one-time event, ensuring steady progress and lasting improvement.

- Enforced systematically, not relying on manual effort. Automation and clear policies keep consistency and reduce errors.

- Scalable across systems and teams, ensuring wide use and supporting growth without quality loss.

With cIV, validation is no longer a documentation exercise.

It becomes a controlled, traceable, and continuously governed system ensuring both GxP software and AI tools meet the highest standards of regulatory compliance.

8.0. Closing Insight

The future of regulatory submissions is not just AI-assisted; it is fully AI-governed. AI will lead and manage the entire submission workflow.

Success depends on one critical capability:

The ability to combine automation with accountability seamlessly and reliably.

The shift is from using AI to enhance tasks…

to using AI that regulators can fully trust and depend upon, built on transparent, auditable, and compliant processes that meet stringent regulatory standards.
